Tech History

-

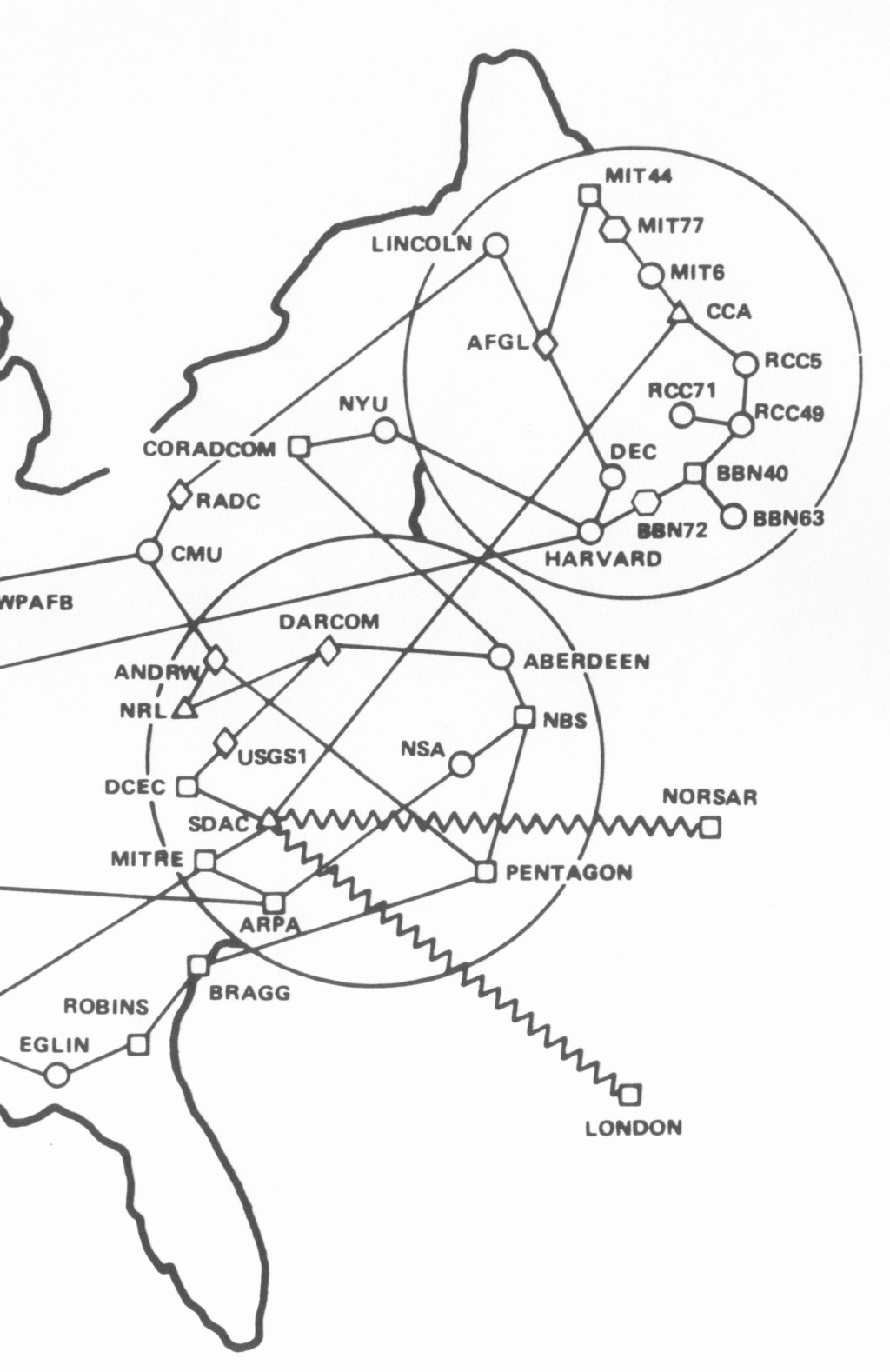

ARPANET Maps

Here is a set of geographical maps of the ARPANET in the 1970s and 1980s. There…

-

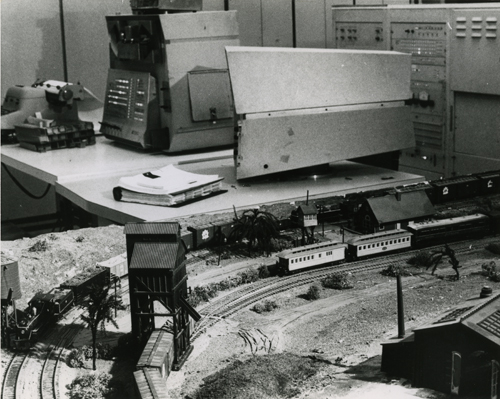

PDP 1s and Model Trains

Elsewhere on the Internet last week, people were solving a mystery about a PDP-1 running a…

-

Two Longs and a Short

By Dick Pence. This story appeared in The Washington Post in 1991, shortly after a computer…

-

A Yank at Bletchley Park

A friend and colleague introduced me to a 94-year-old gentleman with a rare tale to tell. John…

-

Which cables to keep, which to discard?

CNET recently published a list of cables to keep and cables to discard. I like to…

-

Pragmatic Security: the history of the Visa card

I’ve been looking at the evolution of electronic funds transfer (EFT) and payment systems recently. My…

-

Files using Classic FORTH

(circa 1970-85, maybe later) The Forth programming system was developed in the late 1960s by Chuck Moore.…
