I was chatting online at a website to get my lawn tractor repaired. When I finished, I said, “So you’re a chatbot. Cool.”

I’m sure I was talking to a chatbot program and not a human. The reply was a brief but emphatic “No!”

I’m not sure how to interpret that. Will a company be honest about whether you are talking to a human or a chatbot? Should it? Must it?

A chatbot is a conversational computer program that handles “chat” interactions. There are marketing reasons for a company to insist that all customer service is via live humans. There are probably also strong bottom-line reasons to use chatbots wherever possible: they have to be cheaper than human agents and easier to control.

I have no objection to chatbots. I find them a lot easier to use than “Press 1 for awful hold music” phone menu systems.

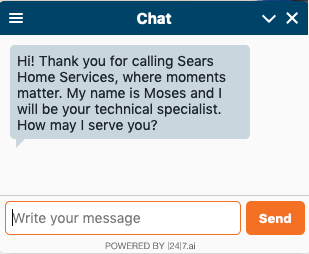

A company should feel obliged to be honest about using chatbots. The “Chat” window shown above was similar to the one I used, and it belongs to “[24]7.AI,” a chatbot vendor.

Some vendors intermix chatbot interactions with real human interactions, but [24]7 is not usually one of them, according to Forrester Research. Intermixing can also be a source of ethical pain: does the company need to signal when the listener changes? Arguably so, since chat conversations are normally attributed to a named individual (e.g. “Moses” in the above chat).

Detecting a Chatbot: A Digression

Chatbots use “artificial intelligence” (AI) techniques to interact effectively in human conversations. Basic techniques date back to about 1964: Joseph Weizenbaum’s “Eliza/Doctor” program. A friend wrote a variant at Boston University for our RAX system in the 1970s. It was a source of much entertainment.

How can you tell if you’re talking to a chatbot or not? Curiously, this is a restatement of the Turing Test, a classic AI thought experiment. Turing asked the question, “How do we tell if a computer is intelligent?” He answered it with the Turing Test: if you have a lengthy conversation via chat, and you can’t tell whether you are talking to a human or a computer, then the computer is intelligent.

Here are some tricks I’d suggest:

- Repeat the same question several times and see if the answer is ‘too identical’

- Can you talk in circles without the chatter getting impatient?

- Does the chatter indicate any awareness of the world outside the specific goal you’re trying to achieve? This is subtle. Bots won’t understand puns, cultural allusions, or metaphors.
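The first trick, checking whether repeated answers are ‘too identical,’ can be roughly quantified. Here is a minimal Python sketch, assuming you have copied the replies out of the chat window by hand. The function names and the 0.95 threshold are illustrative assumptions, not part of any chat vendor’s tooling.

```python
from difflib import SequenceMatcher
from itertools import combinations


def normalize(reply: str) -> str:
    """Lowercase and collapse whitespace so trivial differences don't count."""
    return " ".join(reply.lower().split())


def mean_pairwise_similarity(replies: list[str]) -> float:
    """Average SequenceMatcher ratio over every pair of replies (0.0 to 1.0)."""
    cleaned = [normalize(r) for r in replies]
    pairs = list(combinations(cleaned, 2))
    if not pairs:
        raise ValueError("need at least two replies to compare")
    return sum(SequenceMatcher(None, a, b).ratio() for a, b in pairs) / len(pairs)


def looks_too_identical(replies: list[str], threshold: float = 0.95) -> bool:
    """True when the replies are nearly word-for-word the same.

    The threshold is a hypothetical cutoff chosen for illustration only.
    """
    return mean_pairwise_similarity(replies) >= threshold


if __name__ == "__main__":
    # Made-up replies to the same question asked three times.
    canned = ["I can help with that. What is the model number?"] * 3
    varied = [
        "Sure, what model is your tractor?",
        "Didn't you just ask me that? Which tractor do you have?",
        "Like I said, I need the model number first.",
    ]
    print(looks_too_identical(canned))
    print(looks_too_identical(varied))
```

A high score is only a hint. A human agent pasting canned responses would score just as high, and a bot that randomizes its phrasing would score low.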

I might not always tell a bot from a human, but I can tell a bot from a smart human. Some humans are going to behave like bots.

Reference

Ian Jacobs, “Conversational AI For Customer Service, Q2 2019,” Forrester Research, 11 June 2019.

Excerpt of my chatbot transcript

Visitor (17:07:21 GMT) : OK. So you're a chatbot.

Visitor (17:07:24 GMT) : cool

Maverick White (17:07:32 GMT) : No! Are you the homeowner?

Visitor (17:07:51 GMT) : i don't dare say or you will try to sell me something

Response

[…] *** This is a Security Bloggers Network syndicated blog from Cryptosmith authored by cryptosmith. Read the original post at: https://cryptosmith.com/2019/09/23/ethics-and-chatbots/ […]
